This Workshop will solicit contributions on recent progress in the recognition, analysis, generation-synthesis and modelling of face, body, gesture, speech, audio, text and language, while embracing the most advanced systems available for such analysis in the wild (i.e., in unconstrained environments) and across modalities (e.g., face to voice). In parallel, this Workshop will solicit contributions towards building fair, explainable, trustworthy and privacy-aware models that perform well on all subgroups and improve in-the-wild generalisation.

Original high-quality contributions, in terms of databases, surveys, studies, foundation models, techniques and methodologies (uni-modal or multi-modal; uni-task or multi-task), are solicited on, but are not limited to, the following topics:

facial expression (basic, compound or other) or micro-expression analysis

facial action unit detection

valence-arousal estimation

physiological-based (e.g., EEG, EDA) affect analysis

face recognition, detection or tracking

body recognition, detection or tracking

gesture recognition or detection

pose estimation or tracking

activity recognition or tracking

lip reading and voice understanding

face and body characterization (e.g., behavioral understanding)

characteristic analysis (e.g., gait, age, gender, ethnicity recognition)

group understanding via social cues (e.g., kinship, non-blood relationships, personality)

video, action and event understanding

digital human modeling

violence detection

autonomous driving

domain adaptation, domain generalisation, few- or zero-shot learning for the above cases

fairness, explainability, interpretability, trustworthiness, privacy-awareness, bias mitigation and/or subgroup distribution shift analysis for the above cases

editing, manipulation, image-to-image translation, style mixing, interpolation, inversion and semantic diffusion for all aforementioned cases

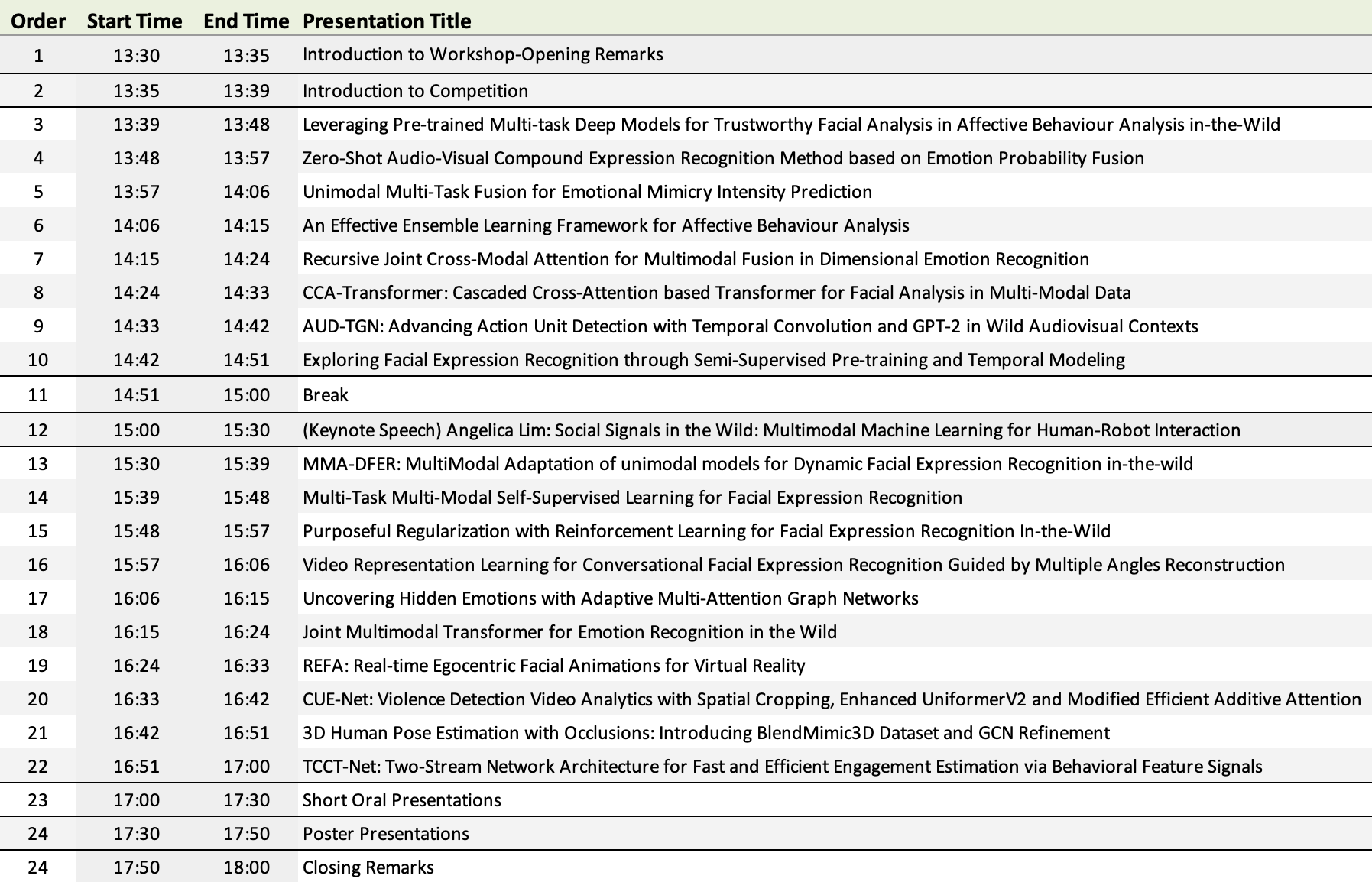

Workshop Important Dates

Paper Submission Deadline: 23:59:59 AoE (Anywhere on Earth) March 30, 2024

Review decisions sent to authors; Notification of acceptance: April 10, 2024

Camera-ready version: April 14, 2024

Submission Information

The paper format should adhere to the paper submission guidelines for the main CVPR 2024 proceedings style. Please have a look at the Submission Guidelines Section here.

We welcome full long paper submissions (between 5 and 8 pages, excluding references or supplementary materials; a paper submission should be at least 4 pages long to be considered for publication). All submissions must be anonymous and conform to the CVPR 2024 standards for double-blind review.

All papers should be submitted using this CMT website.

All accepted manuscripts will be part of the CVPR 2024 conference proceedings.

On the day of the workshop, oral presentations will be conducted by authors who are attending in person.